Cobalt, introduced in New Kingdom times, almost disappears as a constituent of faience in the Third Intermediate period (Kaczmarczyk and Hedges 1983, 259), at a time when there is little evidence of glass production. Several explanations have been proposed for cobalt's short time-span of use. Blom-Böer (1994, 62; Lee and Quirke 2000) suggested that it may be linked to a connection between cobalt blue and the sun-cult as interpreted in the reign of Akhenaten. Lee and Quirke (2000, 111) noted that, apart from the Predynastic period, pottery was commonly painted only in the late 18th to early 19th Dynasties, and therefore the absence of cobalt as a pigment in other periods might relate more to the choice of surface than to the availability of the mineral within Egypt. To some extent, this is substantiated by its presence on pottery of the Amarna period but not on the so-called talatat blocks in temples or the temple chapel wall reliefs of the same period (Lee and Quirke 2000, 111). Limits on accessibility have also been considered by questioning the cobalt source itself. However, this presupposes that the cobalt source is known. Although the cobalt is generally accepted to derive from the Kharga Oasis (e.g. Rehren 2001), a central European provenance has also been proposed, as there seem to have been more intensive Aegean-Egyptian contacts – either direct or via Syria – during the reigns of Amenhotep III and Akhenaten in the late 18th Dynasty, thereby providing the trading network for a short-lived cobalt supply from the far side of the Balkans (Lee and Quirke 2000, 111). However, there are also other locations from which cobalt could have been derived. One of these could be the Anarak district of central Iran, which not only has deposits with the same suite of elements found in cobalt-blue glasses, but has Ni and Co in close association owing to its mineralisation (i.e. skutterudite) and also has variability in its associated minerals. This explains why the relationship between NiO and CoO prevails for both Egyptian and Mesopotamian cobalt-blue glasses but only Egyptian glass exhibits linearity between CoO and MnO and ZnO. Despite these differing speculations, what is clear is that the appearance and disappearance of glass and cobalt seem chronologically linked, at least in Egypt.

The re-analyses of the glass data from the 18th and 19th-20th Dynasties show quite convincingly that a considerable amount of glass was recycled. This is particularly noticeable in the Ramesside period, suggesting that glass was no longer being produced. This recycling was probably specialised. For example, despite some contamination, glass appears to have been separated by colour before re-melting. Recycling also appears to have been accompanied by dilution with a base glass, but only to the point where the deep blue colour was unaffected. This may have been common practice, although by the 19th-20th Dynasties most glass seems to have been mixed, which suggests that dilution may have been a matter of necessity in the later Ramesside period. This necessity appears to coincide with the disappearance of cobalt, not only for glass but for other materials, suggesting that the full suite of colours was a prerequisite for glass-making to flourish. In other words, without the basic colour palette, glass was no longer considered a valuable commodity. This is supported by the fact that blue was the colour of supplication and 'lapis-like' was a metaphor for great riches in both Egypt and Mesopotamia (Wengrow 2018, 32-40), with lapis lazuli itself having travelled along ancient trade routes between Afghanistan and the Mediterranean from the 3rd millennium BC.

The reasons behind the decrease in the appearance of cobalt glass after 1250 BC are still debated. However, from the re-analyses presented above, it appears that it is unrelated to the alum sources of Egypt's Western Desert. The elevated alumina levels of cobalt-blue glass, which have for so long been used to identify the Dakhla and Kharga oases as the source of Egyptian cobalt, can be better explained by the presence of compounds in the glass associated with igneous rocks. Igneous rocks are not unique to Egypt, nor is the titania that is found in association with the alumina. Moreover, titania has not been detected in the alum sources. Furthermore, the low levels of K2O, which have also been used as a discriminator for cobalt-blue glass, can be explained by the addition of a vitreous cobalt-rich frit, with low soda, high silica and low K2O, to local plant-ash fluxed base glasses.
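The dilution effect described here can be illustrated with a simple linear mass balance. The sketch below is not taken from the re-analyses: the function name `mix_oxide` and the K2O values are hypothetical placeholders, chosen only to show the direction of the effect, namely that adding a low-K2O frit to a plant-ash base glass lowers the K2O content of the mixture in proportion to the frit fraction.

```python
def mix_oxide(frit_wt_pct, base_wt_pct, frit_fraction):
    """Linear mass balance for a single oxide in a frit/base-glass mixture.

    frit_wt_pct   -- oxide content of the frit (wt%)
    base_wt_pct   -- oxide content of the base glass (wt%)
    frit_fraction -- mass fraction of frit in the mixture (0 to 1)
    """
    if not 0.0 <= frit_fraction <= 1.0:
        raise ValueError("frit_fraction must lie between 0 and 1")
    return frit_fraction * frit_wt_pct + (1.0 - frit_fraction) * base_wt_pct


# Hypothetical K2O contents (wt%), for illustration only; not measured values.
K2O_FRIT = 0.5
K2O_BASE = 2.5

for fraction in (0.0, 0.25, 0.5):
    k2o = mix_oxide(K2O_FRIT, K2O_BASE, fraction)
    print(f"frit fraction {fraction:.2f}: K2O = {k2o:.2f} wt%")
```

The same function applies to any oxide, so under this assumption the mixture would also show the raised silica and lowered soda of the frit in the same proportion.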

This opens up the debate about where the cobalt used in Egyptian and Mesopotamian glass derived from, or more precisely where and how the cobalt frit was produced that was subsequently used as a concentrated vitreous colourant to produce cobalt-blue glass.

The compositional re-analyses of both cobalt-blue glass and cobalt-blue frit provide some indication of potential cobalt sources. Nickel, zinc, and manganese are potentially found with the cobalt, along with compounds associated with aluminium, titanium, magnesium, potassium and silicon. Concentrations of sulphur and arsenic found in the glasses and frit suggest that some of these elements were in the form of arsenides and sulphides. This suggests Co-Ni-As mineralisation within a siliceous igneous rock that contains alumina, titania, magnesia and potassium oxide. Such mineralisation is often associated with metallic bismuth, native silver and sometimes uranium in what are known as five-element ores, with cobalt arsenide minerals often being associated with sphalerite (ZnS).

It is likely that interest in the five-element ore system was for its visible but dispersed silver concentration rather than directly for cobalt. It should be noted that connections between silver and cobalt have been made before. Although criticised (Kaczmarczyk and Hedges 1983), the work of Dayton (1981; 1993, 38) attempted to link the silver-bearing cobalt ores of Erzgebirge on the Czech-German border (i.e. the far side of the Balkans) with Egyptian and Mycenaean glass. The criticism, however, was perhaps directed at how the connection was presented on the basis of the limited analyses conducted, rather than at the fact that silver and cobalt are intimately associated in these ore deposits. Nevertheless, to quote Nikita and Henderson (2006, 76), 'The information on the provenance of cobalt in Mycenaean blue provided by Dayton is confusing at best and inaccurate at worst'. This criticism, of course, does not provide any validation for Egypt's Western Desert as the source for cobalt.

To retrieve the silver from five-element ores, a flux such as soda would need to be added to the ore charge in order to melt the siliceous rock, similar to the way glass was used to extract metals from associated minerals in 7th-century AD Islamic contexts in West Africa (Rehren and Nixon 2014). Silica was probably added when it was noticed that the glassy phase produced was coloured blue. This scenario would explain the close compositional relationship between Na2O and SiO2 in the frit found at Amarna. The experimental work presented in Section 5 shows that a five-element ore can be fluxed in order to retrieve the silver and produce a concentrated cobalt-blue glass slag. It was also shown that copper partitioned preferentially into the metallic phase, potentially the reason for the low association that copper has with the other components in cobalt-blue frit and glass. It is proposed that this slag, the by-product of the system, was in effect a valuable and traded commodity. However, this also suggests that its availability to the glass-makers was determined directly by the accessibility and availability of the silver-bearing ores.

This leads us to question why such silver-cobalt sources would suddenly become unavailable. It is here that we need to consider how, where and when silver was exploited in antiquity. Native silver or minerals concentrated enough to be smelted directly only occur in very limited quantities (Patterson 1972) and were probably rare even in antiquity (Forbes 1950, 169-230). This has led many researchers to assume that silver was derived from argentiferous lead, such as cerussite or galena, from very early on. Meyers (2003), however, developed a model that attempted to describe the trajectory of use of silver-based ores. This was based primarily on the empirical observation that gold levels are generally much lower in galena than in cerussite owing to the gold content concentrating in the oxidised ores, i.e. cerussite. The model suggested that objects analysed having a gold content greater than 0.1% derive potentially from cerussite rather than galena, allowing transitions in the technologies to exploit silver-bearing ores to be identified. The transitions proposed were that silver ores (e.g. cerargyrite) were exploited first followed by oxidised lead ores (e.g. cerussite) and, finally, the primary sulphide ores (e.g. galena), in line with the expectation that near-surface deposits would be the first to be exploited.
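The gold-content screen in this model can be written as a one-line rule. The sketch below is only an illustration of the threshold quoted above: the function name `likely_lead_ore` is hypothetical, and assigning everything at or below 0.1% gold to galena is a simplifying assumption made here, not a claim of the model, which treats the threshold as indicative rather than decisive. It also assumes the silver derives from an argentiferous lead ore in the first place, which is the very assumption questioned in this section.

```python
GOLD_THRESHOLD_WT_PCT = 0.1  # threshold quoted in the text for Meyers' model


def likely_lead_ore(gold_wt_pct):
    """Suggest a lead-ore type from the gold content (wt%) of a silver object.

    Above the threshold the model points to oxidised ores (cerussite);
    treating all other values as galena is a simplification for illustration.
    """
    if gold_wt_pct < 0:
        raise ValueError("gold content cannot be negative")
    return "cerussite" if gold_wt_pct > GOLD_THRESHOLD_WT_PCT else "galena"


# Hypothetical gold contents (wt%), for illustration only.
for gold in (0.02, 0.1, 0.8):
    print(f"Au = {gold} wt% -> {likely_lead_ore(gold)}")
```

A rule of this kind says nothing about native silver or siliceous silver ores, so on its own it cannot separate cupelled silver from silver that never passed through lead.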

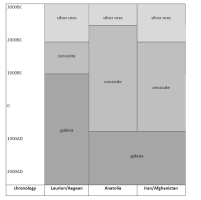

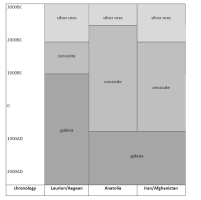

According to Meyers' model (Figure 37), the first transition (native silver and dry silver ores to oxidised argentiferous lead ores such as cerussite) took place in the 3rd millennium BC for the regions of Laurion/Aegean, Anatolia and Iran/Afghanistan, while the second transition (oxidised argentiferous lead ores to primary ores such as galena) took place in about 1000 BC for Laurion/Aegean but not until the Arab conquest (c. 700 AD) for Anatolia and Iran/Afghanistan. Regardless of whether this tentative timeline is accurate, what it demonstrates is that by the 1st millennium BC virtually all silver must have come from ores containing only a few thousand parts per million at most (Craddock 2014).

As well as suggesting that surface deposits were more likely to be exploited earlier, the model also implies that extracting silver from native silver and siliceous silver ores is relatively straightforward compared to the extraction of silver from argentiferous lead, which was in effect the first time that trace amounts of one metal were separated from another. On first inspection, this would seem to be true: cupellation, in which the argentiferous lead is subjected to an oxidising blast at around 1000°C, oxidising the lead to litharge (PbO) but leaving small quantities of silver as a separate metal phase, is a more complex operation than direct melting or smelting. Although evidence of this operation has been found from 3300 BC (Pernicka et al. 1998), the issue here is not when argentiferous lead ores were first exploited, but that certain ores were exhausted, thereby reducing the number of feasible methods to acquire silver.

As mentioned earlier, Smith (1967) argued that the earliest silver objects were made of native metal or of cerargyrite, both of which form silver on melting under a cover of charcoal. Such a method works for relatively pure silver ores containing large crystals of the silver mineral. However, native silver and siliceous ores are present not only as wires and large crystals but also as plates over quartz, as dendrites inside quartz fissures, and as grains, scales and tiny groups of crystals within volcanic basalt minerals such as plagioclase (NaAlSi3O8 – CaAl2Si2O8), i.e. minerals with appreciable alumina concentrations. It is well known that native silver, for example, is often mixed with quartz (Bastin 1922). This suggests that even though the silver may be visible to the naked eye, unless it can be separated out by mechanical means (e.g. concentrating by selecting the purest ores and/or by crushing and milling to extract silver or silver minerals), extraction requires the removal of the surrounding siliceous matrix. This requires smelting the ores at high temperatures in a furnace with a flux to reduce the melting point of the silica matrix, i.e. silver is unlikely to be extracted completely from the host mineral over a simple charcoal fire.

In effect, this suggests that another early transition is required in Meyers' model: once the relatively pure and mechanically refinable silver minerals had been exhausted, there would have been a need to extract potentially visible silver/silver minerals that were embedded and dispersed within a siliceous matrix. To place this transition onto the timeline mentioned above, it would have been most widely applied after the exhaustion of native silver and easily accessible dry silver ores, and before the purposeful exploitation of argentiferous lead-bearing ores such as cerussite and galena. In other words, it probably occurred around the time that cerussite began to be exploited, i.e. around the middle of the 2nd millennium BC, reflecting the need to employ more complicated processes to meet the demand for silver. Moreover, the fact that it later became more judicious to exploit cerussite and galena suggests that the exploitation of these increasingly difficult native and dry silver sources had a limited time-frame.

Evidence for the use of native silver or siliceous silver ores is generally indirect. As described above, Meyers uses gold levels as a proxy to assign silver to silver ore types. However, it should be noted that despite the limited amounts of Egyptian silver (and the even fewer analyses that record elements other than silver, gold and copper), early Egyptian silver is often effectively lead-free (Mishara and Meyers 1974, 29-45). This could suggest that some silver, deriving from the five-element ore deposits, travelled with the cobalt. Despite low lead levels (i.e. most Egyptian objects on the Oxalid database have <0.1% Pb; Mishara and Meyers 1974, 29-45), the low gold levels have led to the suggestion that Egyptian silver that was not from an electrum source must have derived from argentiferous galena (e.g. Gale and Stos-Gale 1981a; 1981b). However, if the data are taken at face value, the absence of lead is more indicative of native silver or silver-bearing ores that do not contain lead, unless the cupellation process was so sophisticated as to remove all the lead (as it may have been in later periods). As mentioned earlier, the silver in Early Bronze Age objects recovered at Ur was interpreted as having originated from a fahlore (Salzmann et al. 2016), similar to the mineralisation found at five-element deposits in central Iran; the objects suggested a silver, copper, lead and zinc mineralisation, as well as traces of cobalt. However, this silver is still viewed as a product of cupellation despite lead levels being as low as 0.1% for some samples (Salzmann et al. 2016). Furthermore, in Egypt there is very little evidence of any metal being added deliberately to silver at this time. The copper concentration of ancient Egyptian silver remains below the level required to improve the mechanical properties (<5%) until the Late period. This suggests that silver was not intentionally alloyed at all, which along with the absence of lead could be interpreted as deliberate avoidance of contaminating silver (Ransom Williams 1924, 28-30), in a similar fashion to how tin-bronze was resisted in Egypt until the 2nd millennium BC and, even at that time, was accorded a lower value than pure (unalloyed) copper (Wengrow 2018, 94). There is also no evidence that Egypt went through the stage of using coarse alloys of silver and lead as found on Cyprus (about 2000-1500 BC) (Ransom Williams 1924), which could suggest that silver used in Egypt derived from native silver or siliceous silver ores throughout the New Kingdom period. Moreover, lead isotope analyses show that some Egyptian yellow and red glass is consistent with Mesopotamian glass from Tell Brak and Tell Rimah, glass from Susa and ores, litharge and slag from Iran, as well as silver recovered in Egypt, Sidon, Ur and Syria, copper and lead objects recovered at Amarna and green frit from El Rakham in Egypt (Figure 28). Thus, silver could have travelled from Iran to Egypt along with yellow glass, cobalt-blue glass and frit, perhaps using similar trade routes to those for lapis lazuli from Afghanistan.

In essence, the arguments presented above suggest that the exhaustion of easily accessible silver provoked a need to exploit native silver and silver minerals in visible but less accessible sources. This resulted in the production of cobalt-blue frit as a by-product of this extraction process. This vitreous concentrated colourant was used to colour base glasses across the ancient world, until even these less accessible silver sources became depleted. The demand for silver was maintained by exploiting argentiferous lead ores and eventually jarosite from south-west Iberia. New sources of cobalt, however, were not utilised for glass for another five hundred years.

Internet Archaeology is an open access journal based in the Department of Archaeology, University of York. Except where otherwise noted, content from this work may be used under the terms of the Creative Commons Attribution 3.0 (CC BY) Unported licence, which permits unrestricted use, distribution, and reproduction in any medium, provided that attribution to the author(s), the title of the work, the Internet Archaeology journal and the relevant URL/DOI are given.